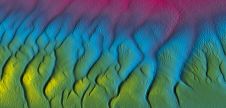

Rapidly Identifying Patterns and Problems in Multibeam Datasets

Improvements to Shallow-water Surveying

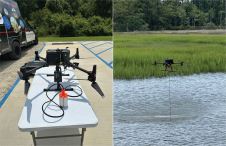

The Naval Oceanographic Office (NAVOCEANO) utilises a diverse suite of survey assets to collect high-resolution oceanographic and hydrographic data in shallow-water areas all over the globe. These assets maintain a high operational tempo, resulting in extremely large data volumes. The success of these data collection efforts depends on the quality of the collection systems and the ability of personnel to rapidly identify and correct errors.

By Lawrence H. Haselmaier, Jr., Anna M. Manning and Matthew A. Thompson, Naval Oceanographic Office, USA.

The collection systems employed by NAVOCEANO are considered to be among the best in the industry, and the survey travel personnel employed by NAVOCEANO are both hardworking and innovative. Even with a combination of adequate equipment and a professional workforce, raw and processed data quality cannot be guaranteed in all instances because:

- Most NAVOCEANO surveys are multidisciplinary in nature, with one particular data type being the primary data collection focus. Staffing a survey party entirely with full-time hydrographic surveyors (in the case of a primary hydrographic collection) would be preferred but is not realistic for NAVOCEANO survey requirements.

- Surveying duties for seagoing personnel account for only a small portion of their overall employment responsibilities. The duties required on survey missions are complex and often differ significantly from the duties required while in-house at NAVOCEANO. While the majority of the employees in the Hydrographic Department at NAVOCEANO are survey travellers, most focus neither on data collection nor on data processing on a daily basis while in-house. Maintaining expertise and proficiency with survey collection and processing is inherently challenging.

- Responsibilities of the survey party members typically end once the survey mission has concluded. Surveyors resume their in-house responsibilities immediately upon return to the office with no additional opportunity to finalise datasets prior to in-house production efforts. Survey datasets may not be ready for in-house production efforts once the crew disembarks the survey vessel.

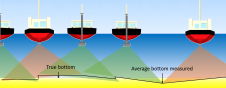

Because of these nuances of the NAVOCEANO survey structure, the Hydrographic Department observed recurrent problems with survey datasets, including deviations from the Survey Technical Specification documents, incorrect configuration biases, incorrect installation and runtime parameters for the multibeam sonar systems, and data cleanliness issues. Each of these factors was having a direct influence on hydrographic product delivery timelines, as in-house resources were required to address the issues (which in a handful of cases were unresolvable and required re-collection).

Monitoring Datasets

To serve in a more proactive capacity and to ensure Subject Matter Expert (SME) oversight on all hydrographic operations, the Hydrographic Department has developed tools and methods, leveraging NAVOCEANO’s commercial C/Ku Band data transfer capability, to provide SMEs the ability to monitor data during collection and to rapidly identify anomalies and troubleshoot issues to support the forward-deployed survey crew. The current capacity for data transfer of approximately 25 GB per day supports SME visibility on a representative sample of multibeam sonar Generic Sensor Format (GSF) data files.
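The article does not say how the representative sample is chosen. As a minimal sketch, assuming files are simply thinned at an even stride until they fit the roughly 25 GB daily budget, the selection could look like the following (the file names, file sizes and the `select_sample` helper are hypothetical):

```python
GB = 10**9                      # decimal gigabyte, assumed for illustration
DAILY_BUDGET = 25 * GB          # approximate daily transfer capacity from the text


def select_sample(files, budget_bytes=DAILY_BUDGET):
    """Return an evenly spaced subset of (name, size_bytes) pairs that fits the budget.

    Hypothetical strategy: keep every file if they all fit, otherwise keep
    every 2nd, 3rd, ... file until the subset is small enough.
    """
    if sum(size for _, size in files) <= budget_bytes:
        return list(files)
    for stride in range(2, len(files) + 1):
        subset = files[::stride]
        if sum(size for _, size in subset) <= budget_bytes:
            return subset
    return []                   # even a single file exceeds the budget


# Example: forty 2 GB files collected in one day (made-up numbers).
day_files = [(f"line_{i:03d}.gsf", 2 * GB) for i in range(40)]
sample = select_sample(day_files)
```

Even spacing keeps the sample spread across the whole survey day rather than clustered at its start, which matters if problems appear only after a configuration change partway through.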

To enable thorough and timely monitoring of those datasets, three general assumptions were made about multibeam data quality issues:

- most data are of good quality,

- most issues occur in patterns, and

- most cases of operator error are easily introduced.

Each of these assumptions led to specific design choices for the NAVOCEANO monitoring strategy. Along with these assumptions, other ground rules were established to promote the efficiency of data monitoring.

Focus on the problem areas: If most NAVOCEANO data are of good quality, data volume matters less than it would if quality problems were scattered indiscriminately throughout all datasets. Monitoring tools that rapidly identify and highlight issues effectively convert a large, amorphous dataset into a small, meaningful one.

Illuminate the trends: If patterns are present with most data issues, identification would become markedly easier, and fewer anomalies would go undetected. Output information from monitoring tools is organised to optimise efficacy of human review methods, making trends stand out more.

Automate wherever possible: If most operator error is simple to introduce, methods of mitigating and correcting it should minimise the introduction of further operator error. It is not sensible to employ tools that are more tedious to use or that require more human intervention than the erroneously configured systems they are attempting to examine.
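These ground rules can be illustrated with a small sketch. It assumes ping records have already been read into dictionaries (the `ping` and `speed_kn` keys and the 10-knot limit are hypothetical, not taken from the NAVOCEANO tools) and collapses consecutive problem pings into runs, so a reviewer sees a few patterns instead of thousands of individual records:

```python
from itertools import groupby


def flagged_runs(pings, is_problem):
    """Collapse consecutive problem pings into (first_ping, last_ping, count) runs."""
    runs = []
    for problem, group in groupby(pings, key=is_problem):
        if problem:
            group = list(group)
            runs.append((group[0]["ping"], group[-1]["ping"], len(group)))
    return runs


# Made-up example: vessel speed per ping, flagged above a hypothetical 10 knots.
speeds = [8.0, 8.5, 11.0, 11.2, 11.1, 8.0, 12.0, 8.0]
pings = [{"ping": i + 1, "speed_kn": s} for i, s in enumerate(speeds)]
runs = flagged_runs(pings, lambda p: p["speed_kn"] > 10.0)
```

Good pings never reach the output, and each run reads as a single event with a start, an end and a length, which is the form in which a pattern is easiest to spot.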

Data Detective Services Suite

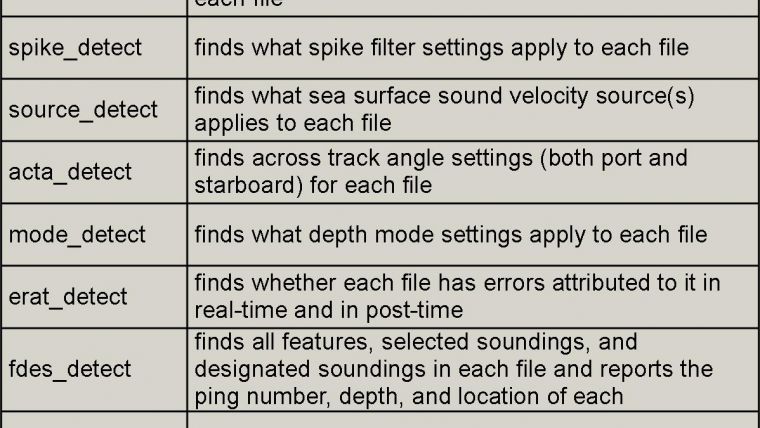

The first tools created for this monitoring effort were the Data Detective Services Suite (D2S2), a growing series of command line-based C programs to examine the contents of GSF files. Although several commercially available programs are able to examine GSF files, none were found that quickly and simply dissected those files to separate the problematic files and file portions (or files with particular selected parameters) from the files that do not meet those criteria. From early 2012 to the time of this writing, 29 modules were developed as part of D2S2 and address the areas of runtime parameters, ping and beam statuses, vessel speed, editing status, and waveform information, among others. The report formats are simple and text-based to maximise their universality and minimise the effort required to maintain the programs. D2S2 is currently employed on all Linux workstations used by the Hydrographic Department, both in-house and aboard NAVOCEANO vessels. A sample of the current list of programs is shown in Figure 1.
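The D2S2 modules themselves are C programs that read GSF files directly and are not reproduced here. As a rough sketch of the single-purpose, text-report style described above, the following Python assumes the runtime parameters have already been extracted into dictionaries; the parameter names, expected values and the `runtime_report` helper are hypothetical:

```python
def runtime_report(files, expected):
    """Return tab-separated report lines for parameters that deviate from expected.

    `files` is a list of (file_name, params_dict); files that match the
    expected settings produce no output at all.
    """
    lines = []
    for name, params in files:
        for key, want in expected.items():
            got = params.get(key)
            if got != want:
                lines.append(f"{name}\t{key}\texpected={want}\tfound={got}")
    return lines


# Made-up settings standing in for a Survey Technical Specification.
expected = {"ping_mode": "shallow", "max_angle_deg": 65}
files = [
    ("line_001.gsf", {"ping_mode": "shallow", "max_angle_deg": 65}),
    ("line_002.gsf", {"ping_mode": "deep", "max_angle_deg": 65}),
]
for line in runtime_report(files, expected):
    print(line)
```

Plain tab-separated text keeps the report readable in a terminal and easy to pass to other tools, in keeping with the stated aim of simple, universal report formats.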

Data Correction Services Suite

The same considerations that led to the creation of D2S2 were later extended to include not only viewing multibeam GSF data but also performing basic corrections or attributions to those files when the situation merited it. Because of the sequential file structure of GSF data, several programs were created as part of the Data Correction Services Suite (DCS2) by making small modifications to existing D2S2 programs. What’s more, the algorithms at the heart of DCS2 programs can often be easily adjusted to detect and modify another GSF parameter or condition. Example functions of DCS2 include removal of vertical correctors, removal of erroneous out-of-sequence data records, attribution of vertical datum information, and removal of specific types of beam edits, among others. As of this writing, there are seven DCS2 modules, each of which is listed in Figure 2. Like D2S2, DCS2 is employed on all Hydrographic Department Linux workstations.
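The step from a detecting program to a correcting one can be sketched as a single sequential pass with one extra branch. The example below is an illustration only, not the DCS2 code: it assumes records are dictionaries with a `time` field and treats a record as out of sequence when its time is earlier than the latest accepted record (both assumptions are hypothetical).

```python
def scan_sequence(records, fix=False):
    """Walk records in file order and find those whose time goes backwards.

    With fix=False this only detects (all records are kept); with fix=True
    the same loop also drops the offending records.
    Returns (kept_records, out_of_sequence_records).
    """
    kept, out_of_sequence = [], []
    last_time = None
    for rec in records:
        if last_time is not None and rec["time"] < last_time:
            out_of_sequence.append(rec)
            if fix:
                continue            # corrective mode: skip the bad record
        else:
            last_time = rec["time"]
        kept.append(rec)
    return kept, out_of_sequence


# Made-up record times with one record written out of order.
records = [{"time": t} for t in (0.0, 1.0, 2.0, 1.5, 3.0, 4.0)]
cleaned, removed = scan_sequence(records, fix=True)
```

Because the data are read strictly in order, the corrective version differs from the detecting one by a single `continue`, which mirrors how small modifications to an examining program can yield a correcting one.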

Auto Monitor

The latest evolution of the monitoring effort has led to the consolidation of the most frequently used D2S2 tools into a single program with a unified output called ‘Auto Monitor’. Complementary to this development of Auto Monitor was the generation of a standard workbook, formatted specifically to receive this unified output and highlight those areas that deviate from Survey Technical Specification requirements, common settings, or pre-established thresholds. This method of organising the data allows monitoring personnel to see patterns that can aid in discovering the nature of a particular problem and considering potential solutions. While the original D2S2 programs addressed the challenge of analysing vast datasets, Auto Monitor further reduces the time required for issues to be discovered and resolved. The individual D2S2 components used to derive the consolidated output from the Auto Monitor program are shown in Figure 3.
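The idea of a unified output that a workbook can highlight can be sketched as one CSV row per file with an extra column naming every metric that exceeds its threshold. This is an illustrative assumption about the shape of such output, not the Auto Monitor format; the metric names and limits below are hypothetical.

```python
import csv
import io

# Hypothetical limits standing in for specification requirements.
THRESHOLDS = {"max_speed_kn": 10.0, "rejected_beam_pct": 15.0}


def consolidate(per_file_metrics, thresholds=THRESHOLDS):
    """Return CSV text: one row per file plus a 'flags' column of exceeded metrics."""
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    columns = sorted(thresholds)
    writer.writerow(["file", *columns, "flags"])
    for name, metrics in per_file_metrics:
        flags = [c for c in columns if metrics[c] > thresholds[c]]
        writer.writerow([name, *(metrics[c] for c in columns), ";".join(flags)])
    return out.getvalue()


# Made-up results from two separate checks, merged per file.
metrics = [
    ("line_001.gsf", {"max_speed_kn": 8.2, "rejected_beam_pct": 4.0}),
    ("line_002.gsf", {"max_speed_kn": 11.5, "rejected_beam_pct": 22.0}),
]
print(consolidate(metrics))
```

Keeping every file in the table, with flags in their own column, lets a reviewer sort or filter on the flags and see whether deviations cluster by time, vessel or setting.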

The Hydrographic Department has been successful employing these methods to assure the quality of our shallow-water survey missions. Future development for these monitoring efforts will focus on an increased level of automation and linking common problematic data symptoms to known and established solutions.

Acknowledgements

The views expressed in this document are those of the authors and do not necessarily reflect the official policy or position of the Department of the Navy, the Department of Defense, or the US Government.
