Applying Side-scan Sonar and an AI-powered Platform for Environmental Protection

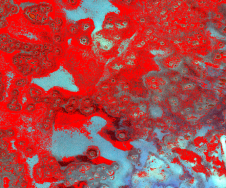

Over the past few years, the University of Delaware, USA, has participated in a state programme that supports the recovery of abandoned crab pots from the seabed and researches technologies to make the search more effective. It has developed a workflow of boat-based sonar surveys, followed by map storage and processing in SPH Engineering’s ATLAS AI-powered platform. In evaluations, the approach proved faster and more accurate than detection by human annotators.

Blue crab fishing is a popular commercial and recreational activity throughout the United States. To catch these crabs, people use 'crab pots': devices that are placed on the seafloor and tied to a float with a rope. The issue is that lost pots, known as 'ghost pots', can stay on the seafloor for years, turning into deadly traps for fish and crabs.

AI-powered Image Processing and Object Detection

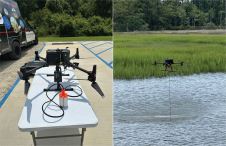

To explore the seafloor for lost crab pots, the University of Delaware surveyed it with a high-frequency consumer side-scan sonar, synthesized georeferenced images from the sonar data and analysed them with ATLAS, an AI-powered image processing, object detection and territory segmentation software by SPH Engineering.

The university produced a shareable web map of the located crab pots in ATLAS and a report of the georeferenced spots in GeoJSON. With this approach, the detector worked much faster and more consistently than the human annotators, which supports the guided detection and recovery of ghost pots.
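For readers who want to work with such a report, a GeoJSON file can be read with standard tools. The following is a minimal Python sketch, assuming the report is a GeoJSON FeatureCollection in which each detection is a Point feature. The sample coordinates and the property names `label` and `confidence` are hypothetical and are not taken from the ATLAS export format.

```python
import json

# Hypothetical sample report. The coordinates and the property names
# ("label", "confidence") are assumptions for illustration only; they are
# not the documented ATLAS export schema.
SAMPLE_REPORT = """
{
  "type": "FeatureCollection",
  "features": [
    {"type": "Feature",
     "geometry": {"type": "Point", "coordinates": [-75.10, 38.60]},
     "properties": {"label": "crab_pot", "confidence": 0.91}},
    {"type": "Feature",
     "geometry": {"type": "Point", "coordinates": [-75.11, 38.61]},
     "properties": {"label": "crab_pot", "confidence": 0.42}},
    {"type": "Feature",
     "geometry": {"type": "Polygon",
                  "coordinates": [[[-75.2, 38.5], [-75.1, 38.5],
                                   [-75.1, 38.6], [-75.2, 38.5]]]},
     "properties": {"label": "sand"}}
  ]
}
"""


def load_detections(geojson_text, min_confidence=0.5):
    """Return (lon, lat, confidence) tuples for Point features above a threshold."""
    collection = json.loads(geojson_text)
    detections = []
    for feature in collection.get("features", []):
        geometry = feature.get("geometry") or {}
        if geometry.get("type") != "Point":
            continue  # skip non-point features such as seabed polygons
        # GeoJSON stores positions as [longitude, latitude].
        lon, lat = geometry["coordinates"][:2]
        confidence = (feature.get("properties") or {}).get("confidence", 1.0)
        if confidence >= min_confidence:
            detections.append((lon, lat, confidence))
    return detections


detections = load_detections(SAMPLE_REPORT)
for lon, lat, confidence in detections:
    print(f"lat {lat:.5f}, lon {lon:.5f} (confidence {confidence:.2f})")
```

A list like this could be loaded into a chartplotter or GIS to guide a recovery crew to each spot. The confidence threshold is an illustrative choice, not a setting described in the project.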

Arthur Trembanis, professor of oceanography at the University of Delaware's School of Marine Science and Policy, explained that, given the threats that ghost pots present to the environment, it is critical to detect crab pots efficiently and completely in order to help guide clean-up efforts.

Processing in the Cloud

“Annotating side-scan sonar mosaics is very tedious and time consuming for human operators, especially when we have such a high abundance of targets and large areas to cover,” said Trembanis. “Our initial training and testing with ATLAS has been encouraging. The ATLAS interface was easy to use and within about 30 minutes of annotation, the system was able to train and then operate over our entire map domain.”

Trembanis added that the ability to have processing in the cloud with email updates was very beneficial and noted that the technical support team has been very responsive and helpful.

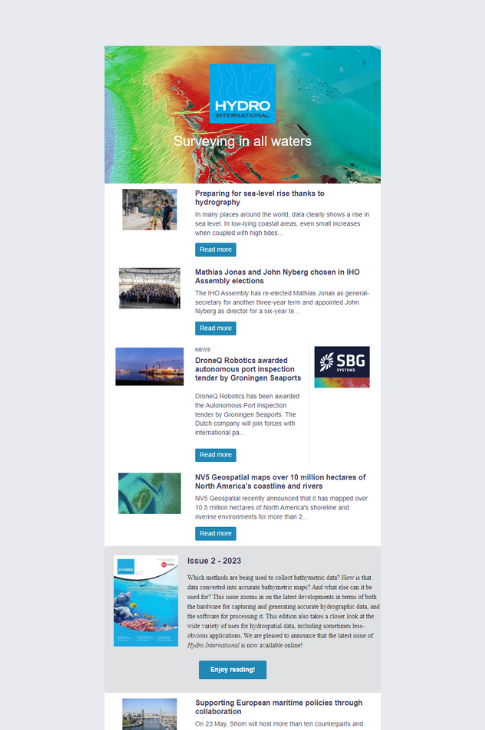

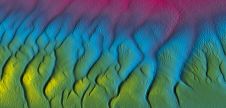

Seabed Bottom Types

“What took our human annotators several dozen hours to complete with manual annotations, ATLAS was able to complete in the cloud within about 30 minutes, and the results compare very positively to our expert annotations,” said Trembanis. “In fact, some of the ATLAS detections have made us re-evaluate human annotator detections for some possibly missed targets. Another aspect of ATLAS that is useful is that not only can we detect targets – ghost crab pots in this case – but we can also get ATLAS to recognize and delineate seabed bottom types (i.e. mud versus sand), which further helps us in understanding the importance of seabed habitat distribution in the region.”
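The article does not describe how the detections were compared with the expert annotations. One simple way to make such a comparison is sketched below in Python, assuming both sets are available as (longitude, latitude) points. The function names, the 2 m tolerance and the sample coordinates are all hypothetical.

```python
import math


def haversine_m(a, b):
    """Great-circle distance in metres between two (lon, lat) points in degrees."""
    lon1, lat1, lon2, lat2 = map(math.radians, (a[0], a[1], b[0], b[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371000.0 * math.asin(math.sqrt(h))


def compare(detections, annotations, tolerance_m=2.0):
    """Greedily pair each detection with the nearest unused annotation.

    Returns (matched pairs, detections with no annotation nearby,
    annotations that no detection matched).
    """
    unused = list(annotations)
    matched, extra = [], []
    for det in detections:
        nearest = min(unused, key=lambda ann: haversine_m(det, ann), default=None)
        if nearest is not None and haversine_m(det, nearest) <= tolerance_m:
            matched.append((det, nearest))
            unused.remove(nearest)
        else:
            extra.append(det)
    return matched, extra, unused


# Hypothetical coordinates, for illustration only.
detected = [(-75.10000, 38.60000), (-75.10100, 38.60050)]
annotated = [(-75.10001, 38.60000), (-75.10300, 38.60200)]

matched, extra, missed = compare(detected, annotated)
print(len(matched), "matched,", len(extra), "detector-only,", len(missed), "annotator-only")
```

Detector-only points are the kind of result that could prompt a second look at the human annotations, while annotator-only points indicate targets the detector may have missed. Greedy nearest-neighbour pairing is a simplification; an optimal assignment method could be swapped in for dense target fields.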

Alexei Yankelevich, R&D director at SPH Engineering, said that SPH Engineering is “grateful to the University of Delaware for this project: it proves that ATLAS performs very well not only for ordinary photography but also for synthesized images from side-scan sonar data. The University of Delaware is a true pioneer in opening a door for a variety of subsurface inspections with AI-assisted data processing. In addition, I would like to say a special thanks to THURN Group Ltd (Norwich, UK), a provider of cutting-edge sonar products, for their expertise and assistance during this project.”

This project was supported by grant funding from Delaware Sea Grant, the School of Marine Science and Policy, and the NOAA Marine Debris Program. The sample dataset can be found here.