The Marine 2020s: Three Ambitious Global Projects

“In all beginnings dwells a magic force …”. Hermann Hesse’s famous verse seems particularly relevant to marine activities in the coming decade. Three ambitious global projects relating to the maritime domain will span the 2020s. The broadest is no doubt the Decade of Ocean Science for Sustainable Development (2021-2030), spearheaded by the United Nations “to support efforts to reverse the cycle of decline in ocean health”. The second half of its title, “for Sustainable Development”, seems to indicate two intentions: first, to link to the United Nations Sustainable Development Goals (2030), and second, to use ocean science to facilitate concrete measures for improvement.

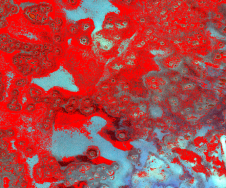

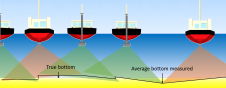

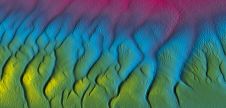

Ocean science has various facets – many of which require baseline information about the ocean’s seabed topography. Hydrographers know that, on a global scale, this data is fragmented and sparse. The global ocean map still presents many plain and empty blue fields. The second project, the joint Nippon Foundation – GEBCO Seabed 2030 project, with its ambition to fill the gaps within this decade, is therefore welcomed by the ocean science community. But where is the missing data to come from? Seabed 2030 is striving to be a ‘concentrator’ for all data that has been collected but not yet exploited. It also aims to stimulate effective new measurement technology. To begin with, a lot of work remains to gather data from a variety of sources. There are technical questions to resolve as to how these data stocks can be identified and brought together. However, a mental shift is also required. Many coastal states are still sceptical about supporting citizen science such as crowd-sourced bathymetry in their national waters. Commercial companies are not inclined to be ‘digital philanthropists’ and donate data that they paid to obtain. And, with regard to scientists, perhaps the competitiveness of the field does not encourage data sharing.

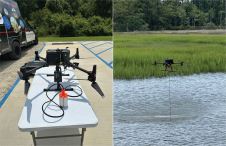

To overcome these challenges, all that can be done is to keep repeating the positive arguments and to be ambitious in generating new data in poorly surveyed areas. Currently, routine surveys are mostly concentrated along the coastal shelf and are dominated by expensive hydroacoustic methods. However, emerging technology may change this. Satellite-derived bathymetry is definitely on the rise, and swarms of unmanned survey launches may soon follow to increase the area surveyed each day. New calculations recently presented at the Atlantic Seabed Mapping International Working Group Meeting indicate that a full survey of the Northern Atlantic with decent resolution would cost approximately US$90 million. While this is high, perhaps we should compare it to other endeavours. Would we have accepted leaving huge areas of the continents unsurveyed because of the cost? Certainly not. And how much did it cost to make images of the dark side of the moon?

The third project of note for the upcoming decade concerns the digital data framework. The IHO Council, at its recent session in autumn 2019, agreed that the next ten years would be the S-100 implementation decade. Some seem to believe that data engineering helps but does not constitute a relevant contribution to ocean science in itself. I regard this as a misconception. Progress in geomatics is nowadays driven by the merging of previously isolated information. New methods such as artificial intelligence and deep learning depend on it. A new term, ‘hydrospatial’, was recently coined at the Canadian Hydrographic Conference to express the three-dimensional, interdisciplinary nature of new ocean-related datasets. These serve expanding groups of stakeholders and help reconcile maritime use and maritime preservation. The generic model of S-100 serves this holistic approach. If we want to succeed in our ambition to save the oceans, all three of these campaigns must interact.
